Pre-task agent memory construction

PREPING builds reusable procedural memory before deployment, using self-generated synthetic practice to prepare agents for new executable environments before any target-environment task experience is available.

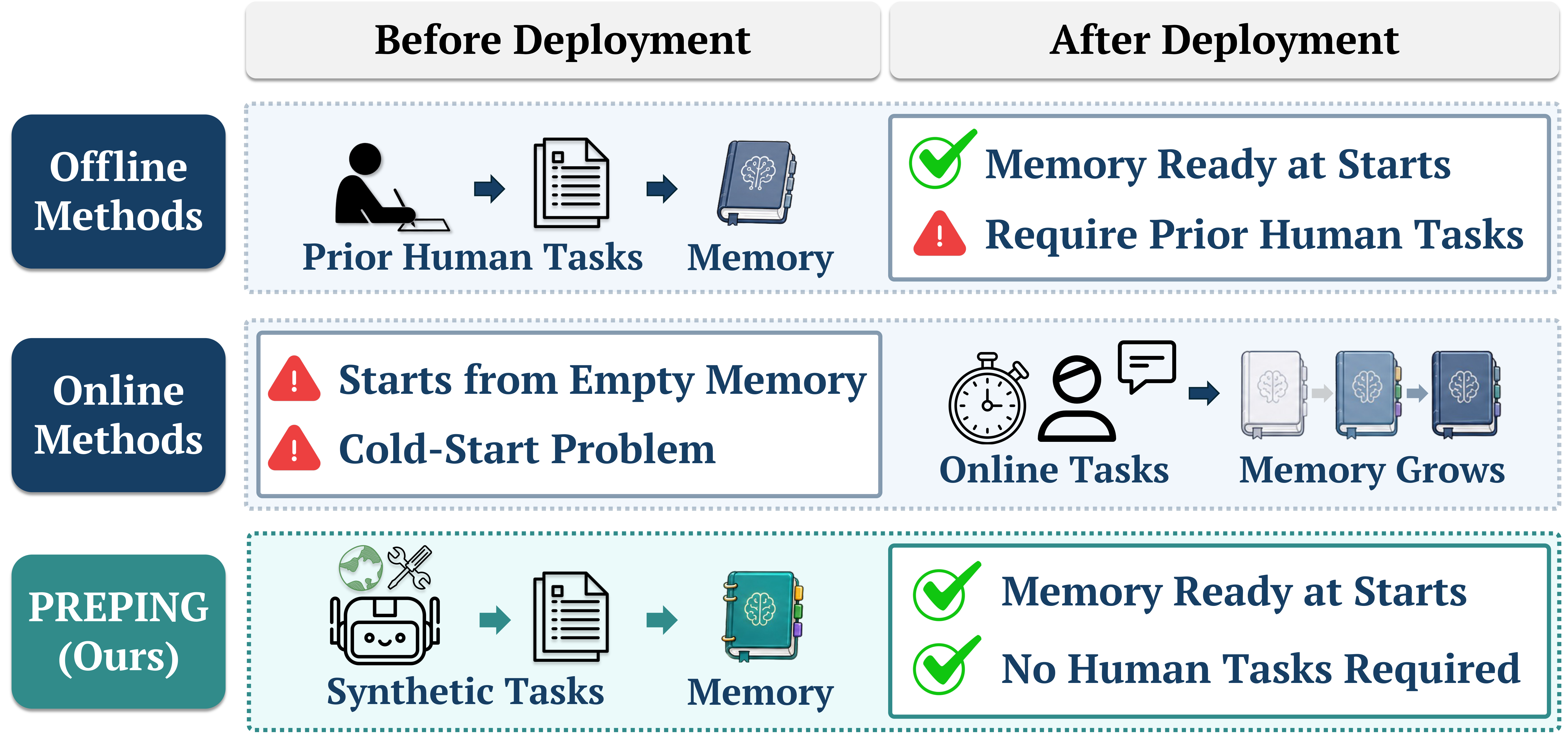

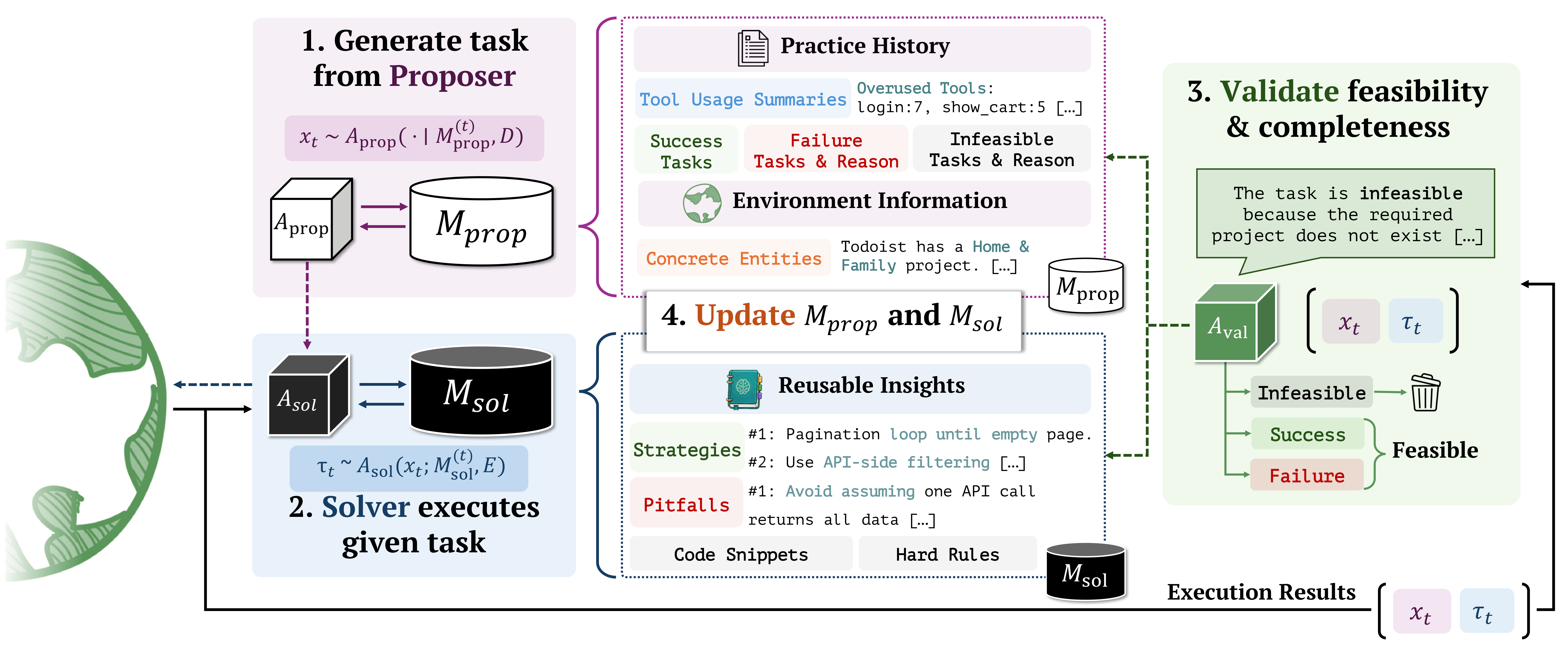

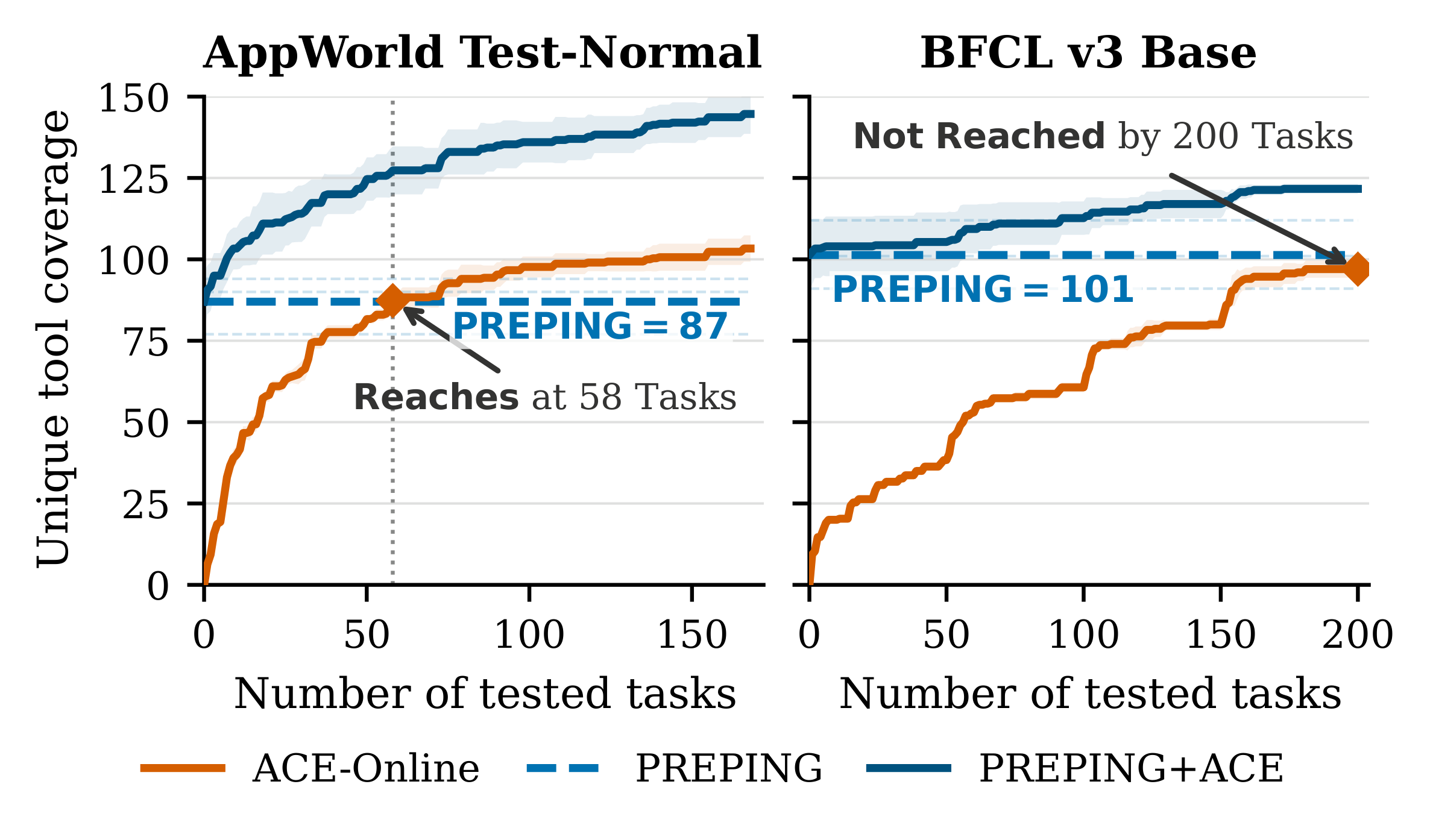

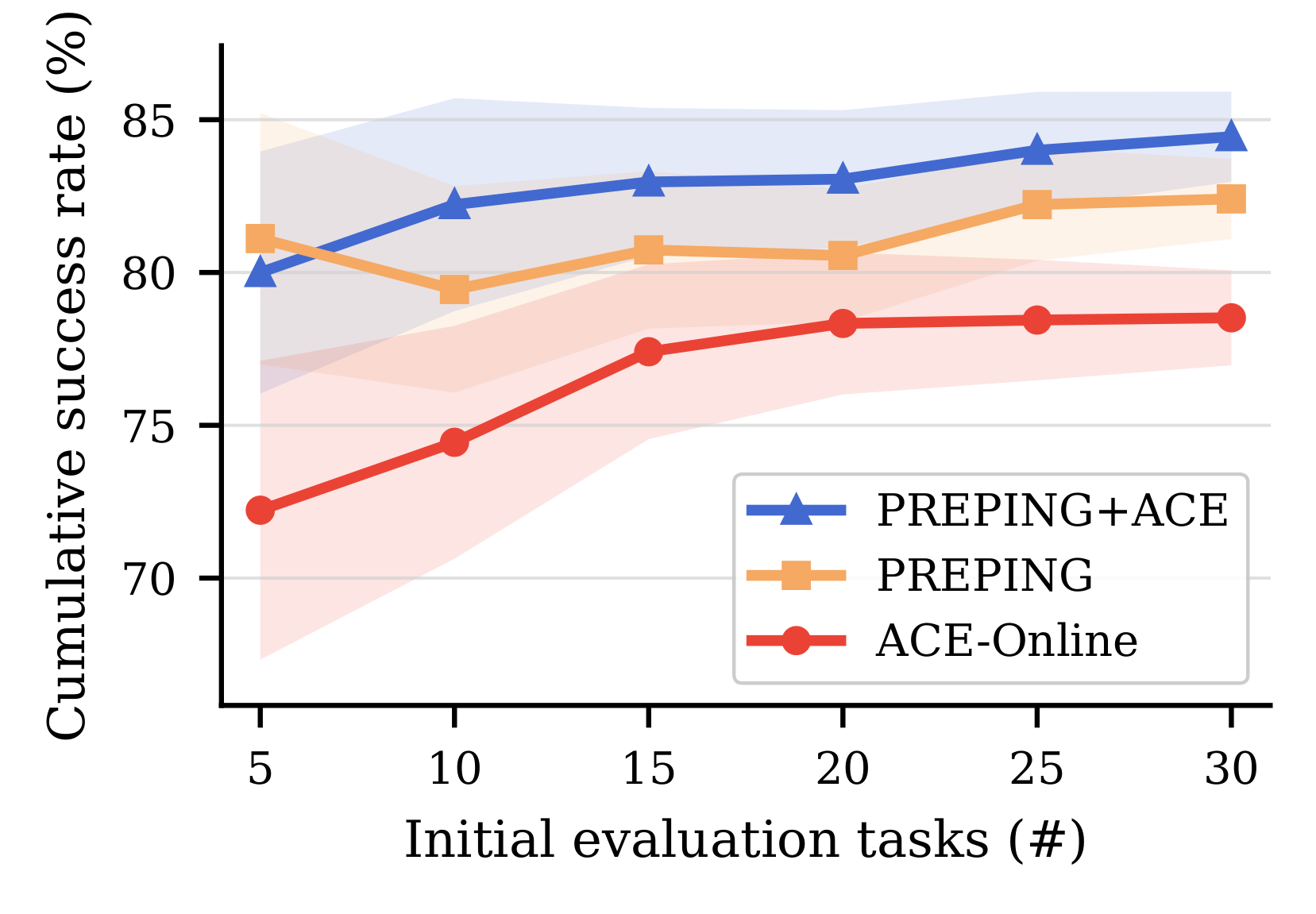

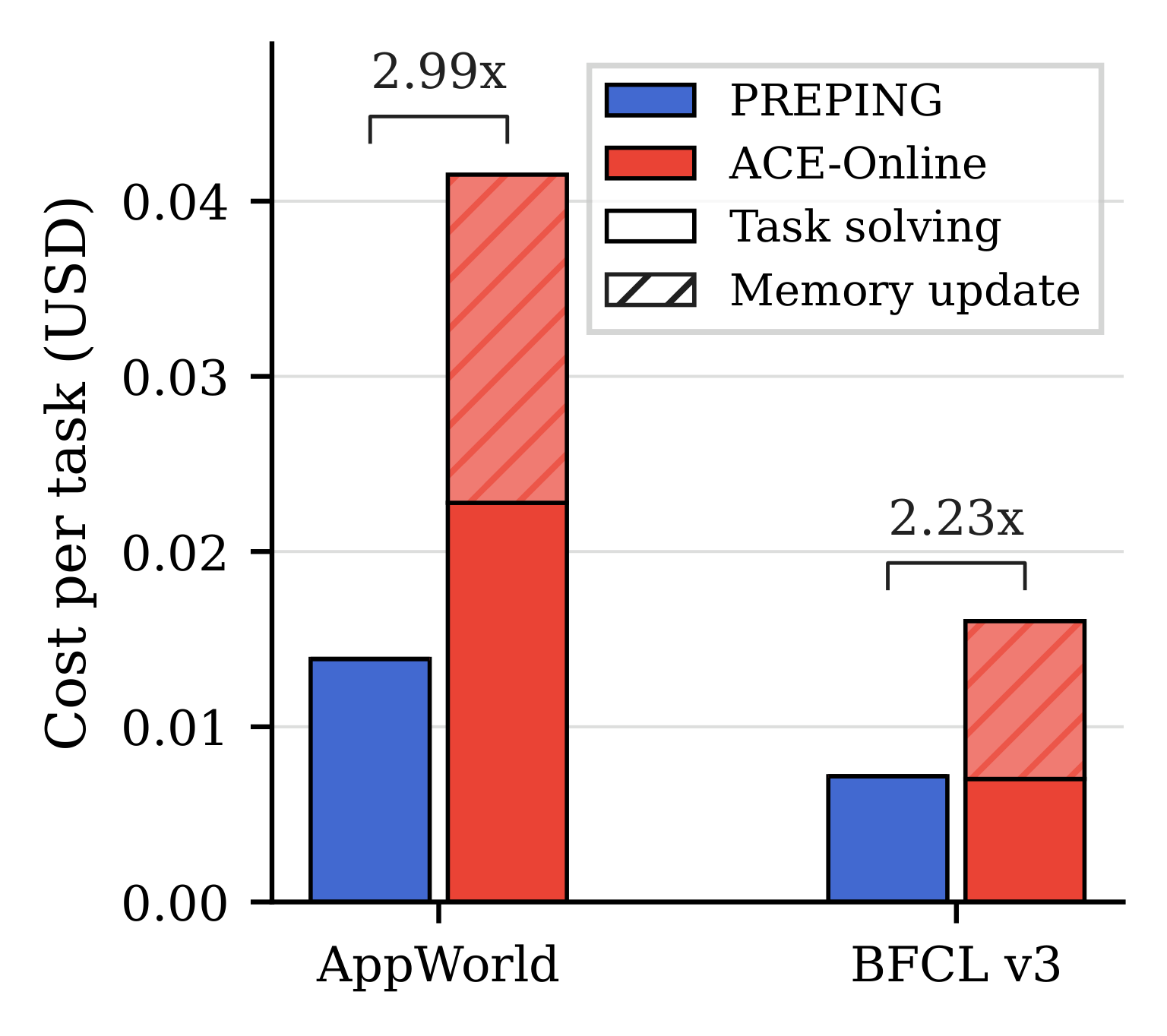

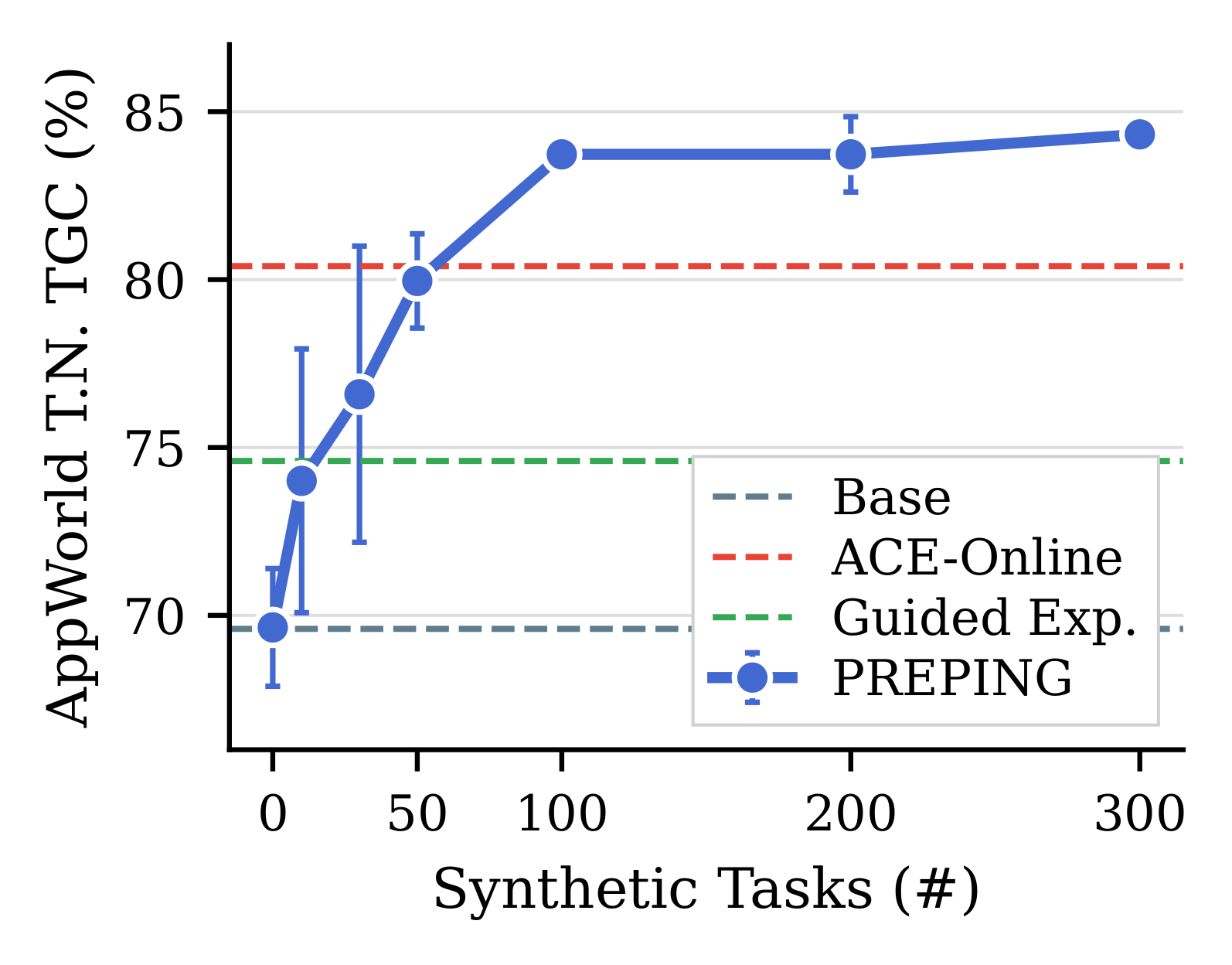

Agent memory is typically constructed either offline from curated demonstrations or online from post-deployment interactions. Either way, an agent faces a cold-start gap when it first enters a new environment with no task-specific experience available. In this paper, we study pre-task memory construction: whether an agent can build procedural memory before observing any target-environment tasks, using only self-generated synthetic practice. Synthetic interaction alone is insufficient, however: without control over what to practice and what to store, synthetic tasks become redundant, infeasible, and ultimately uninformative, and unfiltered trajectories quickly degrade memory. To overcome this, we present PREPING, a proposer-guided memory construction framework. At its core is proposer memory, a structured control state that shapes future practice. A Proposer generates synthetic tasks conditioned on this state, a Solver executes them, and a Validator determines which trajectories are eligible for memory insertion while also providing feedback to guide future proposals. Experiments on AppWorld, BFCL v3, and MCP-Universe show that PREPING substantially improves over a no-memory baseline and is competitive with strong playbook-based methods built from offline or online experience, with deployment cost 2.99x lower on AppWorld and 2.23x lower on BFCL v3 than online memory construction. Further analyses show that the main benefit comes not from synthetic volume alone but from proposer-side control over feasibility, redundancy, and coverage, combined with selective memory updates.

Method

PREPING treats memory construction as two coupled control problems: deciding what to practice before deployment, and deciding what synthetic experience is safe to store.

Proposer

Generates synthetic task-level objectives from documentation and proposer memory, expanding practice toward under-covered environment behavior while avoiding repeated infeasible goals.

Solver

Executes synthetic tasks in the target environment and produces trajectories that expose procedures, preconditions, tool compositions, and failure modes.

Validator

Filters task-trajectory pairs for feasibility and completion, admitting only reliable synthetic experience into deployment-facing solver memory.
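The interaction between the three components can be pictured as a simple loop. The following is a minimal sketch under stated assumptions, not the authors' implementation: the names `ProposerMemory`, `Trajectory`, `propose`, and `build_pre_task_memory`, the fields of the control state, and the toy `solve`/`validate` functions are all hypothetical, and in the real system each role would presumably be played by a language-model agent rather than a plain function.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Set, Tuple


@dataclass
class Trajectory:
    task: str
    steps: List[str]
    completed: bool


@dataclass
class ProposerMemory:
    # Hypothetical control state; these fields are assumptions for illustration.
    covered: Set[str] = field(default_factory=set)
    infeasible: Set[str] = field(default_factory=set)
    attempts: Dict[str, int] = field(default_factory=dict)


def propose(behaviors: List[str], memory: ProposerMemory) -> Optional[str]:
    """Pick the least-attempted behavior that is neither covered nor infeasible."""
    candidates = [b for b in behaviors
                  if b not in memory.covered and b not in memory.infeasible]
    if not candidates:
        return None
    return min(candidates, key=lambda b: memory.attempts.get(b, 0))


def build_pre_task_memory(
    behaviors: List[str],
    solve: Callable[[str], Trajectory],
    validate: Callable[[str, Trajectory], Tuple[bool, bool]],
    budget: int,
) -> Tuple[List[Trajectory], ProposerMemory]:
    proposer_memory = ProposerMemory()
    solver_memory: List[Trajectory] = []
    for _ in range(budget):
        task = propose(behaviors, proposer_memory)
        if task is None:  # nothing left that is uncovered and feasible
            break
        proposer_memory.attempts[task] = proposer_memory.attempts.get(task, 0) + 1
        trajectory = solve(task)
        feasible, complete = validate(task, trajectory)
        if not feasible:
            # Validator feedback: do not propose this goal again.
            proposer_memory.infeasible.add(task)
        elif complete:
            # Only validated trajectories reach deployment-facing memory.
            proposer_memory.covered.add(task)
            solver_memory.append(trajectory)
        # Feasible but incomplete: nothing is stored; the attempt count
        # lowers this task's priority on later rounds.
    return solver_memory, proposer_memory


# Toy stand-ins for the Solver and Validator (purely illustrative).
def toy_solve(task: str) -> Trajectory:
    return Trajectory(task, [f"call {task}"], completed=(task != "delete_root"))


def toy_validate(task: str, trajectory: Trajectory) -> Tuple[bool, bool]:
    return (task != "delete_root", trajectory.completed)


solver_memory, proposer_memory = build_pre_task_memory(
    ["login", "send_payment", "delete_root"], toy_solve, toy_validate, budget=10
)
```

In this toy run the infeasible goal is attempted once, recorded in the control state, and never proposed again, while only the two validated trajectories are admitted to solver memory.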

Results

PREPING improves downstream execution across stateful app workflows, executable function calling, and MCP-server tool use without using target-environment task data during construction.

Online Initialization

Instead of starting ACE-Online from empty memory, PREPING+ACE begins deployment with pre-task memory and continues updating online. This improves performance on both AppWorld and BFCL v3 while preserving the standard online adaptation pathway.
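The difference between a cold start and a warm start can be illustrated with a toy memory store. This is a hypothetical sketch only: `OnlineMemory` and its `update` method are invented for illustration and do not reflect the actual ACE interface, and the entries are made-up strings rather than real playbook content.

```python
from typing import Iterable, List, Optional


class OnlineMemory:
    """Hypothetical online memory that can optionally start from pre-task entries."""

    def __init__(self, initial_entries: Optional[Iterable[str]] = None) -> None:
        self.entries: List[str] = list(initial_entries or [])

    def update(self, entry: str) -> None:
        # The same online update path is used in both settings.
        if entry not in self.entries:
            self.entries.append(entry)


# Made-up entry standing in for memory built before deployment.
pre_task_entries = ["log in before calling payment APIs"]

cold = OnlineMemory()                  # starts empty
warm = OnlineMemory(pre_task_entries)  # starts from pre-task memory

# What each variant knows when the first deployment task arrives.
cold_at_first_task = len(cold.entries)
warm_at_first_task = len(warm.entries)

# Both continue to update online from the same made-up deployment lessons.
for lesson in ["check balance before transfer", "log in before calling payment APIs"]:
    cold.update(lesson)
    warm.update(lesson)
```

The only change is the initial state: the warm-started store already holds usable entries on the first task, and the online update logic is untouched.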

@misc{choi2026preping,
  title = {PREPING: Building Agent Memory without Tasks},
  author = {Choi, Yumin and Park, Sangwoo and Kang, Minki and Baek, Jinheon and Hwang, Sung Ju},
  year = {2026},
  eprint = {2605.13880},
  archivePrefix = {arXiv},
  url = {https://arxiv.org/abs/2605.13880}
}